Image Fusion

Image fusion combines images from different sources, or of different quality, into a single image that is more informative than any of its inputs. Fusion can also improve the quality of a single poor image. This makes it particularly useful in no-reference image quality enhancement, where no clean reference image is available. In the fusion-based strategy discussed here, both the inputs and the weight measures are derived solely from the degraded image. Four weight maps improve the visibility of distant objects, which the medium otherwise obscures by scattering and absorbing light. The two inputs are colour-corrected and contrast-enhanced versions of the original underwater image or video frame. Together they compensate for the degradations typical of underwater imaging. Because the method works on a single image, it needs no specialised hardware, no knowledge of the underwater conditions, and no information about the scene structure. For video, the fusion framework also maintains temporal coherence between adjacent frames through edge-preserving noise reduction. The enhanced images and videos have less noise, better exposed dark regions, higher global contrast, and sharper edges and fine details, all of which benefit real-time applications.

Why Image Fusion

The field of multi-sensor data fusion has evolved to the point where it requires more general and formal solutions for various application scenarios. Some image processing tasks require a single image that contains both a great deal of spatial information and a great deal of spectral information. This is especially important in remote sensing. However, the available instruments cannot provide both, either because of how they are constructed or because of how they are used. Data fusion is one way to address this limitation.

Benefits of Image Fusion

Image fusion has several advantages in image processing applications, some of which are listed below.

- High accuracy

- High reliability

- Fast acquisition of information

- Cost-effectiveness

Imaging underwater is very different from imaging in air. Most underwater photographs are dominated by blue and green tones, and the physical properties of the medium make it hard to see clearly. Because light is attenuated as it passes through water, images captured underwater are less crisp. As distance and depth increase, absorption and scattering make the light progressively dimmer. Scattering changes the direction in which light travels, while absorption removes much of the energy that makes it visible. In addition, a small amount of light is scattered back by the medium along the line of sight, which further reduces scene contrast. The result is a low-contrast scene in which distant objects appear shrouded in mist. In typical sea water, objects more than 10 metres away are difficult to distinguish, and colours become less vibrant as the water gets deeper.
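The attenuation and backscatter described above are often summarised by a simplified image formation model, I = J·t + B·(1 − t), with transmission t = exp(−c·d). Here J is the scene radiance, B is the veiling (backscattered) light, c is a per-channel attenuation coefficient and d is the distance. The text does not state this model. The sketch below applies it with hypothetical coefficient and veiling-light values, chosen only so that red decays fastest. It shows how the contrast between a white and a black object falls with distance, and why distant objects take on a blue-green cast.

```python
import math

# Hypothetical per-channel attenuation coefficients (per metre), chosen only so
# that red is attenuated much faster than green and blue. Not measured values.
ATTENUATION = {"r": 0.60, "g": 0.10, "b": 0.07}
# Hypothetical veiling (backscattered) light colour with a blue-green cast.
VEILING_LIGHT = {"r": 0.05, "g": 0.45, "b": 0.55}


def transmission(coeff: float, distance_m: float) -> float:
    """Fraction of scene radiance surviving a path of distance_m metres."""
    return math.exp(-coeff * distance_m)


def observe(radiance: dict, distance_m: float) -> dict:
    """Simplified formation model per channel: I = J * t + B * (1 - t)."""
    observed = {}
    for ch, j in radiance.items():
        t = transmission(ATTENUATION[ch], distance_m)
        observed[ch] = j * t + VEILING_LIGHT[ch] * (1.0 - t)
    return observed


def contrast(a: dict, b: dict) -> float:
    """Mean absolute per-channel difference between two observed colours."""
    return sum(abs(a[ch] - b[ch]) for ch in a) / len(a)


if __name__ == "__main__":
    white = {"r": 1.0, "g": 1.0, "b": 1.0}
    black = {"r": 0.0, "g": 0.0, "b": 0.0}
    for d in (1, 5, 10, 20):
        w, k = observe(white, d), observe(black, d)
        rounded = {ch: round(v, 2) for ch, v in w.items()}
        print(f"{d:>2} m: white appears as {rounded}, contrast {contrast(w, k):.3f}")
```

In this model the white-black contrast in each channel equals the transmission t, so it decays exponentially with distance. At the same time, the veiling light pulls every colour towards its blue-green tint.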

The enhancement strategy has three main parts: the assignment of inputs (deriving the inputs from the original underwater image), the definition of weight measures, and the multi-scale fusion of the inputs and weight measures.
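The three parts can be summarised in a pair of formulas. The notation is assumed here rather than taken from the text. Let I_k (k = 1, ..., K, with K = 2 inputs) be the inputs derived from the underwater image, W_k the aggregated weight map of input k, and x a pixel location. The weights are first normalised so that they sum to one at every pixel. The inputs are then blended level by level on image pyramids, as is usual for multi-scale fusion:

```latex
\bar{W}_k(\mathbf{x}) = \frac{W_k(\mathbf{x})}{\sum_{j=1}^{K} W_j(\mathbf{x})},
\qquad
R_l(\mathbf{x}) = \sum_{k=1}^{K} G_l\{\bar{W}_k\}(\mathbf{x}) \, L_l\{I_k\}(\mathbf{x})
```

Here G_l{·} and L_l{·} denote level l of the Gaussian and Laplacian pyramids. The enhanced image is obtained by collapsing the fused levels R_l. With a single level, this reduces to the per-pixel blend R(x) = Σ_k W̄_k(x) I_k(x).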

Inputs

Fusion algorithms work well only when they are given well-chosen inputs and weights. Image fusion in general combines two or more images while preserving the most important features of each. This method differs from most other fusion methods, none of which were designed for underwater scenes, in that it uses only a single degraded image.
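The text states that both inputs are derived from the single degraded image, one colour-corrected and one contrast-enhanced, but it does not specify the operations. The sketch below assumes gray-world white balancing for the colour-corrected input. For the contrast-enhanced input, it assumes a simple percentile-based linear contrast stretch of that white-balanced result. Both are stand-ins, and other corrections (for example adaptive histogram equalisation) could be used instead. The function names are hypothetical.

```python
import numpy as np


def gray_world_white_balance(img):
    """Colour-corrected input (assumed technique): scale each channel so its
    mean equals the mean over all channels. img: float RGB in [0, 1], (H, W, 3)."""
    channel_means = img.reshape(-1, 3).mean(axis=0)
    gains = channel_means.mean() / np.maximum(channel_means, 1e-6)  # guard /0
    return np.clip(img * gains, 0.0, 1.0)


def contrast_stretch(img, low_pct=1.0, high_pct=99.0):
    """Contrast-enhanced input (assumed technique): linearly map the
    [low_pct, high_pct] percentile range of intensities onto [0, 1]."""
    lo, hi = np.percentile(img, [low_pct, high_pct])
    if hi - lo < 1e-6:  # nearly flat image: nothing to stretch
        return img.copy()
    return np.clip((img - lo) / (hi - lo), 0.0, 1.0)


def derive_inputs(img):
    """Both fusion inputs are derived from the single degraded image."""
    balanced = gray_world_white_balance(img)
    return balanced, contrast_stretch(balanced)


if __name__ == "__main__":
    rng = np.random.default_rng(0)
    # Synthetic frame with a blue-green cast (weak red channel).
    frame = rng.random((4, 4, 3)) * np.array([0.3, 0.8, 0.7])
    colour_corrected, contrast_enhanced = derive_inputs(frame)
    print(colour_corrected.reshape(-1, 3).mean(axis=0))
```

Images are assumed to be float RGB arrays with values in [0, 1]. Gray-world balancing is a convenient default because it needs no parameters. However, it can over-correct scenes that are genuinely dominated by one colour.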

Weight measures

The weight measures must take into account the desired appearance of the restored output. We argue that image restoration is closely related to colour appearance. As a result, measurable quantities such as salient features, local and global contrast, and exposedness are difficult to combine by simple per-pixel blending without introducing artefacts. Pixels with higher weight values are more strongly represented in the final image. Four weights are considered: the Laplacian contrast weight, the local contrast weight, the saliency weight, and the exposedness weight.
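A minimal NumPy sketch of the four weight maps follows. It rests on several assumptions:

- luminance is computed with Rec. 601 coefficients;
- a box filter stands in for a Gaussian low-pass;
- saliency is simplified to the distance between the mean image colour and each blurred pixel colour in RGB;
- the exposedness weight favours luminance near 0.5 (σ = 0.25).

Aggregating the four maps by summation, and all function names, are also assumptions for illustration, not the method's definitive implementation. The maps are normalised so that, at each pixel, the weights of all inputs sum to one.

```python
import numpy as np


def luminance(img):
    """Rec. 601 luma of a float RGB image (H, W, 3) in [0, 1]."""
    return img @ np.array([0.299, 0.587, 0.114])


def box_blur(x, radius=2):
    """Mean over a (2r+1) x (2r+1) window with replicated edges (stand-in for a Gaussian)."""
    h, w = x.shape
    p = np.pad(x, radius, mode="edge")
    out = np.zeros((h, w))
    for dy in range(2 * radius + 1):
        for dx in range(2 * radius + 1):
            out += p[dy:dy + h, dx:dx + w]
    return out / (2 * radius + 1) ** 2


def laplacian_weight(img):
    """Absolute response of a 4-neighbour Laplacian filter on luminance."""
    y = luminance(img)
    p = np.pad(y, 1, mode="edge")
    lap = p[:-2, 1:-1] + p[2:, 1:-1] + p[1:-1, :-2] + p[1:-1, 2:] - 4.0 * y
    return np.abs(lap)


def local_contrast_weight(img, radius=2):
    """Deviation of each pixel's luminance from its local average."""
    y = luminance(img)
    return np.abs(y - box_blur(y, radius))


def saliency_weight(img, radius=2):
    """Simplified frequency-tuned saliency: distance between the mean image
    colour and each blurred pixel colour (RGB here rather than Lab)."""
    mean_colour = img.reshape(-1, 3).mean(axis=0)
    blurred = np.stack([box_blur(img[..., c], radius) for c in range(3)], axis=-1)
    return np.linalg.norm(blurred - mean_colour, axis=-1)


def exposedness_weight(img, sigma=0.25):
    """Favours luminance values close to mid-grey (0.5)."""
    y = luminance(img)
    return np.exp(-((y - 0.5) ** 2) / (2.0 * sigma ** 2))


def normalised_weights(inputs, eps=1e-12):
    """Aggregate the four maps per input (summation is an assumption), then
    normalise so the weights of all inputs sum to one at every pixel."""
    raw = [
        laplacian_weight(i) + local_contrast_weight(i)
        + saliency_weight(i) + exposedness_weight(i)
        for i in inputs
    ]
    total = np.sum(raw, axis=0) + eps
    return [w / total for w in raw]
```

Because the exposedness weight is strictly positive, the total is never zero. The small `eps` only guards against degenerate inputs.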

Fusion

The enhanced image is obtained by fusing the defined inputs with the weight measures at each pixel location.
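Blending the inputs directly with the normalised weights at a single scale tends to produce the artefacts mentioned under Weight measures. For this reason, fusion is usually carried out on image pyramids, in four steps:

- each input is decomposed into a Laplacian pyramid;
- each normalised weight map is decomposed into a Gaussian pyramid;
- the two are multiplied and summed level by level;
- the fused pyramid is collapsed.

The sketch below assumes a [1, 2, 1]/4 smoothing filter and nearest-neighbour upsampling. The helper names are hypothetical.

```python
import numpy as np


def _blur(x):
    """Separable [1, 2, 1]/4 smoothing over the two spatial axes, edges replicated."""
    pad = [(1, 1), (1, 1)] + [(0, 0)] * (x.ndim - 2)
    p = np.pad(x, pad, mode="edge")
    v = (p[:-2] + 2.0 * p[1:-1] + p[2:]) / 4.0          # vertical pass
    return (v[:, :-2] + 2.0 * v[:, 1:-1] + v[:, 2:]) / 4.0  # horizontal pass


def _down(x):
    """Smooth, then keep every second row and column."""
    return _blur(x)[::2, ::2]


def _up(x, shape):
    """Nearest-neighbour upsampling cropped to `shape`, then smoothing."""
    up = np.repeat(np.repeat(x, 2, axis=0), 2, axis=1)[: shape[0], : shape[1]]
    return _blur(up)


def gaussian_pyramid(x, levels):
    pyr = [x]
    for _ in range(levels - 1):
        pyr.append(_down(pyr[-1]))
    return pyr


def laplacian_pyramid(x, levels):
    """Band-pass levels plus the coarsest Gaussian level as the residual."""
    g = gaussian_pyramid(x, levels)
    return [g[i] - _up(g[i + 1], g[i].shape) for i in range(levels - 1)] + [g[-1]]


def collapse(pyr):
    """Invert laplacian_pyramid exactly by upsampling and adding back each level."""
    out = pyr[-1]
    for lap in reversed(pyr[:-1]):
        out = lap + _up(out, lap.shape)
    return out


def multiscale_fusion(inputs, weights, levels=4):
    """inputs: list of (H, W, 3) float images; weights: list of (H, W) maps
    that already sum to one per pixel. Returns the fused image in [0, 1]."""
    fused = None
    for img, w in zip(inputs, weights):
        lp = laplacian_pyramid(img, levels)
        gp = gaussian_pyramid(w, levels)
        terms = [l * g[..., None] for l, g in zip(lp, gp)]
        fused = terms if fused is None else [f + t for f, t in zip(fused, terms)]
    return np.clip(collapse(fused), 0.0, 1.0)
```

A quick sanity check: with all of the weight on one input, `multiscale_fusion` returns that input unchanged. Because `collapse` uses the same upsampling as `laplacian_pyramid`, the reconstruction is exact.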

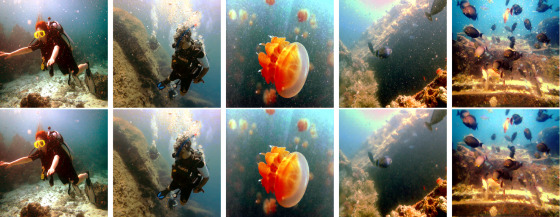

In the experiments, the methodology is applied to numerous underwater images and its performance is evaluated. The images are taken from the Underwater Image Enhancement Benchmark dataset (UIEB). The UIEB comprises two subsets: the first contains 890 raw underwater images with corresponding high-quality reference images, and the second contains 60 challenging underwater images. For a qualitative evaluation, Figure 1 shows a selection of underwater images together with the results of applying the methodology described above. The original images on the left of Figure 1 are blurry, and most underwater objects cannot be seen clearly. As a result, object detection programs could not find the smaller objects in these images, which makes recognition harder. The fusion process removes the haze, revealing even the smallest objects and particles that were hidden in the background. Because this pre-processing method produces images of such high quality, they can be used in real-time applications.
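The evaluation above is qualitative. However, the first UIEB subset pairs each raw image with a reference image, so enhanced results could also be scored against those references. Below is a minimal sketch of one common full-reference metric, peak signal-to-noise ratio (PSNR). The choice of metric and the function name are assumptions; the text reports no such scores.

```python
import numpy as np


def psnr(result, reference, peak=1.0):
    """Peak signal-to-noise ratio in dB between two images with values in [0, peak]."""
    if result.shape != reference.shape:
        raise ValueError("images must have the same shape")
    diff = result.astype(np.float64) - reference.astype(np.float64)
    mse = np.mean(diff ** 2)
    if mse == 0:
        return float("inf")  # identical images
    return float(10.0 * np.log10(peak ** 2 / mse))
```

Higher values indicate a closer match to the reference. Full-reference scores like this do not apply to the 60 challenging images, which lack reference images.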

The fusion technique for enhancing underwater images can be applied in a variety of contexts. Most applications use it as a pre-processing step to improve the quality of underwater images. Two such applications, and how they are used in the real world, are described below.

Fish detection and tracking

Many apps for Android and iOS devices can help you identify fish and act as a guide to the underwater world. Many kinds of users, from anglers to scuba divers, rely on them for different tasks. These apps typically contain many pictures and species-specific information about each fish, such as how deep to dive and where to go to catch the most fish. Some of them can identify a fish almost immediately. Examples include Picture Fish, FishVerify, Fishidy, and FishBrain.

Coral-reef monitoring

Coral reefs protect beaches from storms and erosion, create jobs for local communities, and provide places for recreation. They are also a source of new foods and medicines. Reefs provide food, income, and shelter for more than 500 million people. Local businesses earn hundreds of millions of dollars from people who fish, dive, and snorkel on and near reefs. The net economic value of the world's coral reefs is estimated at tens of billions of dollars. Underwater Coral Reef is a beautiful, easy-to-use mobile application that lets you customise your device. It is compatible with almost all devices, does not require a constant Internet connection, uses little battery, and offers simple user-interface settings.

Beyond these two applications, the fusion-based enhancement procedure is also used in sea cucumber identification, pipeline monitoring, and other underwater object detection and identification tasks.